Robust Underwater Structured Light using Neural Representations

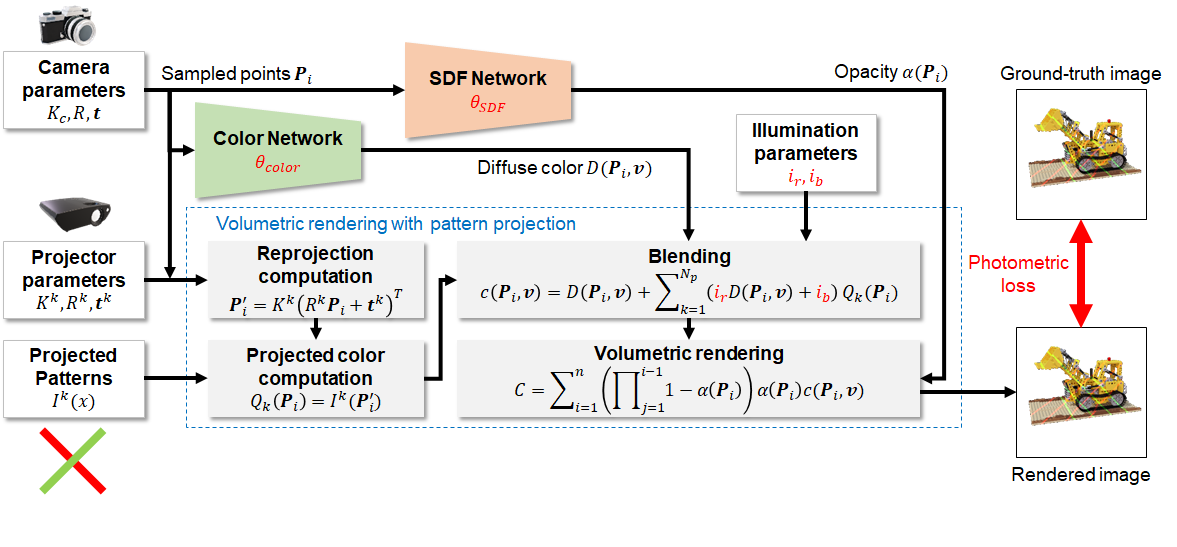

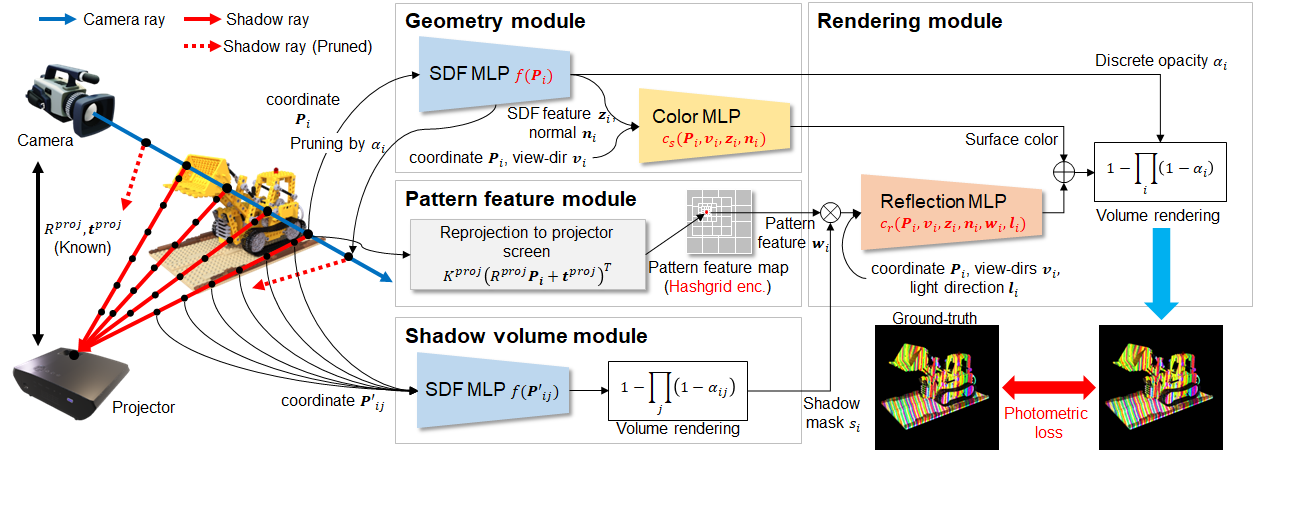

Structured light is a triangulation technique: a projector casts a known pattern onto the scene, and a camera observes how the surface distorts that pattern. In one-shot reconstruction under challenging conditions (severe light attenuation, scattering, or dynamic scenes), the method often suffers from noise, which leads to sparse reconstructions or significantly degraded accuracy. By introducing the expressive power of "Neural Fields," a concept recently advanced in the field of novel view synthesis, we achieve continuous, high-definition 3D reconstruction that is not constrained by discrete, pixel-wise geometry.

[Method 1: Continuous Shape Reconstruction using Neural SDF (ActiveNeuS)]

Instead of traditional voxel or mesh representations, we define the object's shape as a "Neural Signed Distance Field (Neural SDF)" approximated by a neural network. We developed "ActiveNeuS," which samples 3D points along each camera ray $r(t) = o + t d$, reprojects them into the projector's coordinate system, and optimizes the shape by minimizing the photometric error between the intensity expected from the projection pattern and the intensity observed in the camera image. Because the space is learned as a continuous function, reconstruction reaches sub-pixel accuracy and is not tied to the pixel resolution. This makes it possible to interpolate and reconstruct smooth, hole-free surfaces even from low-resolution data that traditional point-correspondence-based methods would lose to noise.
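The reprojection and photometric error described for ActiveNeuS can be illustrated with a small sketch. This is not the authors' implementation; it rests on several assumptions that do not come from the text. A sphere stands in for the neural SDF, the projector is axis-aligned with the camera and offset by a hypothetical baseline, the pattern is a cosine stripe, and the per-sample weights follow the NeuS formulation, which the method's name suggests but the text does not state.

```python
import math

PROJECTOR_ORIGIN = (0.2, 0.0, 0.0)  # hypothetical baseline of 0.2 along x
FOCAL, CX = 500.0, 320.0            # hypothetical projector intrinsics

def sdf(p):
    """Stand-in for the neural SDF: a sphere of radius 0.5 centred at z = 2."""
    return math.dist(p, (0.0, 0.0, 2.0)) - 0.5

def pattern_intensity(p):
    """Reproject a 3D point into the projector and read a stripe pattern."""
    x = p[0] - PROJECTOR_ORIGIN[0]
    z = p[2] - PROJECTOR_ORIGIN[2]
    u = FOCAL * x / z + CX  # projector column under a pinhole model
    return 0.5 + 0.5 * math.cos(2.0 * math.pi * u / 64.0)

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def render_ray(o, d, near=0.5, far=3.0, n=512, sharpness=200.0):
    """Expected pattern intensity along the ray r(t) = o + t d."""
    ts = [near + (far - near) * i / n for i in range(n + 1)]
    points = [tuple(o[k] + t * d[k] for k in range(3)) for t in ts]
    cdf = [sigmoid(sharpness * sdf(p)) for p in points]
    rendered, transmittance = 0.0, 1.0
    for i in range(n):
        # NeuS-style opacity: non-zero only where the SDF decreases along the ray.
        alpha = max((cdf[i] - cdf[i + 1]) / (cdf[i] + 1e-8), 0.0)
        t_mid = 0.5 * (ts[i] + ts[i + 1])
        mid = tuple(o[k] + t_mid * d[k] for k in range(3))
        rendered += transmittance * alpha * pattern_intensity(mid)
        transmittance *= 1.0 - alpha
    return rendered

def photometric_loss(rendered, observed):
    return (rendered - observed) ** 2

# The central camera ray meets the sphere at depth 1.5, so a camera pixel
# there would observe the pattern value reprojected from that surface point.
observed = pattern_intensity((0.0, 0.0, 1.5))
rendered = render_ray((0.0, 0.0, 0.0), (0.0, 0.0, 1.0))
loss = photometric_loss(rendered, observed)
```

In a real system the sphere would be replaced by a trainable network, and the loss would be summed over many rays and minimized by gradient descent, so the zero level set of the SDF moves until the rendered and observed intensities agree.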

Rendering pipeline of ActiveNeuS

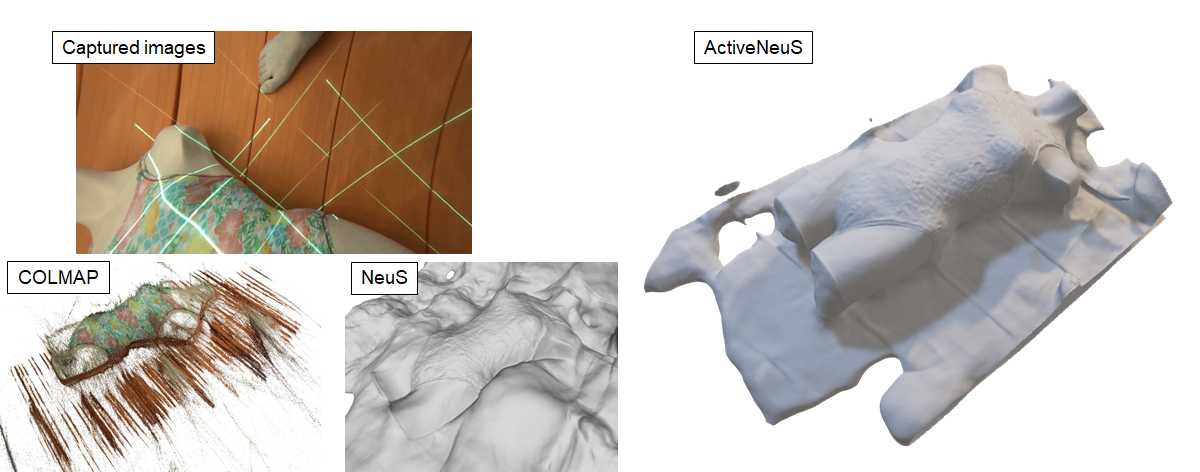

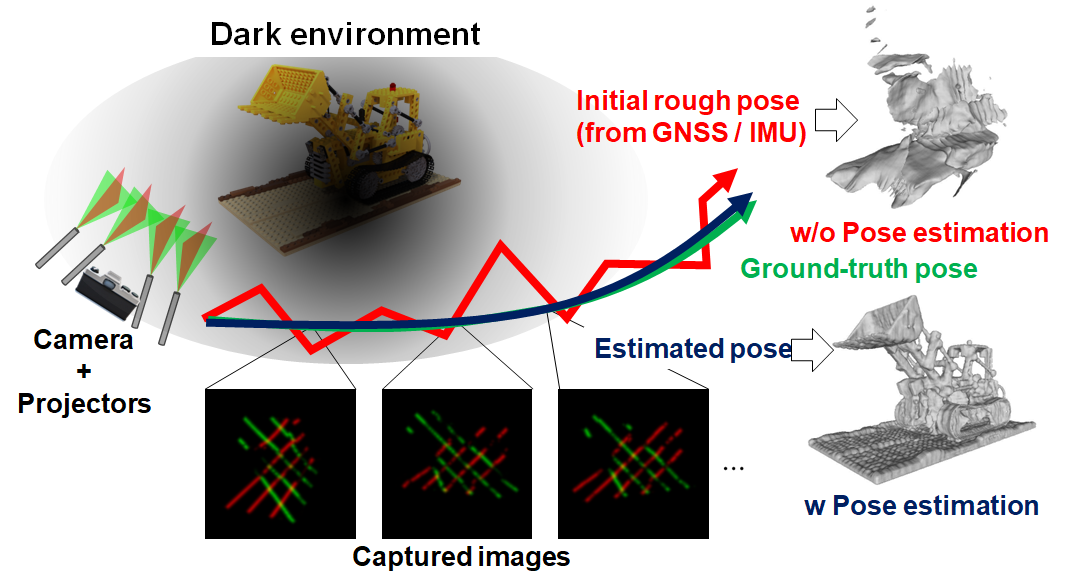

Comparison of reconstruction accuracy with conventional methods

[Method 2: Active-SfM Leveraging Temporal Variations of Projection Patterns]

For environments with sparse texture, where self-localization (SLAM/SfM) is difficult, we developed "Active-SfM," which uses temporal changes in the projection pattern as geometric constraints. The algorithm simultaneously optimizes the shape (SDF) and the camera pose $\{R, t\}$ through a differentiable renderer. It achieves high-precision pose estimation by modeling how the fixed pattern emitted by the projector "slides" across the object's surface as the camera moves. This allows millimeter-precision self-localization even in dark or featureless environments where image features such as SIFT cannot be extracted, and it enables stable, large-scale 3D mapping in extreme scenes where conventional methods fail.
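The idea that a sliding pattern constrains the pose can be illustrated with a toy one-dimensional sketch. Everything here is an assumption made for illustration. The projector is taken to be rigidly mounted beside the camera, the scene is a known tilted plane rather than a jointly optimized SDF, the pattern is a simple intensity ramp, and only a single translation `tx` is estimated. A grid search is also swapped in for the gradient-based optimization that a differentiable renderer would provide.

```python
FOCAL, CX, BASELINE = 500.0, 320.0, 0.2  # hypothetical rig parameters

def depth_on_plane(u, tx):
    """Depth along the camera ray through column u for a rig at x = tx.

    The scene is a hypothetical tilted plane z = 2 + 0.5 * x in world coordinates.
    """
    dx = (u - CX) / FOCAL
    return (2.0 + 0.5 * tx) / (1.0 - 0.5 * dx)

def observed_intensity(u, tx):
    """Pattern value seen at camera column u when the rig sits at x = tx."""
    disparity = FOCAL * BASELINE / depth_on_plane(u, tx)
    projector_column = u - disparity   # shifts as the depth changes
    return projector_column / 640.0    # hypothetical ramp pattern

def photometric_error(tx, columns, observations):
    return sum((observed_intensity(u, tx) - obs) ** 2
               for u, obs in zip(columns, observations))

def estimate_translation(columns, observations, lo=-0.5, hi=0.5, steps=1000):
    """Grid search standing in for gradient-based pose optimization."""
    candidates = [lo + (hi - lo) * i / steps for i in range(steps + 1)]
    return min(candidates,
               key=lambda tx: photometric_error(tx, columns, observations))

columns = list(range(0, 640, 20))
true_tx = 0.13
observations = [observed_intensity(u, true_tx) for u in columns]
estimated_tx = estimate_translation(columns, observations)
```

Because the depth at each pixel changes with the rig position, the projector column seen at that pixel changes too. This lets the photometric error single out the pose without any image features.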

Concept of pose optimization using projection patterns

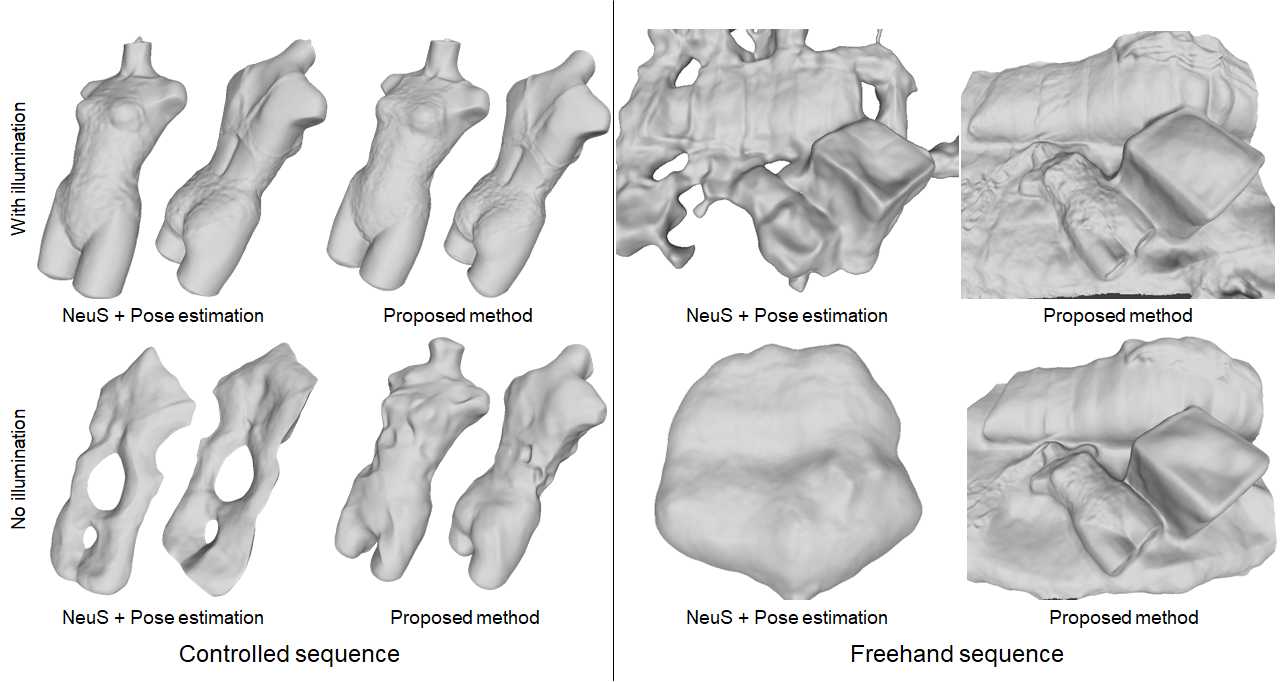

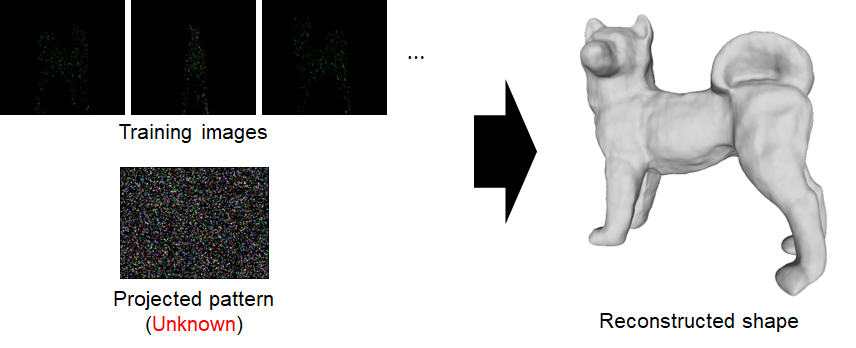

Comparison of reconstruction accuracy with conventional methods

[Method 3: Self-Supervised Learning of Projection Patterns (Unsupervised SL)]

To handle real-world situations where the projection pattern is unknown, or is distorted by lens refraction or hardware misalignment, we proposed "Unsupervised Structured Light," which learns the shape and the radiance distribution of the pattern simultaneously. The pattern's intensity distribution is represented by a neural network with Multi-resolution Hash Encoding, and Shadow Volume Rendering is integrated to handle occlusions explicitly, so that shape and pattern are optimized together. The greatest merit of this approach is its flexibility: it requires no prior information about the pattern. Even if the projection system becomes misaligned through physical impact or environmental changes, the system recovers the correct radiance pattern and geometry from on-site observations alone, which substantially improves practical robustness.
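As a rough illustration of how a multi-resolution hash encoding can represent a 2D pattern, the sketch below looks up a projector coordinate `(u, v)` in several grids of increasing resolution and bilinearly interpolates one scalar feature per level. The level count, table size, growth factor, and random initialization are hypothetical choices. The small network that would decode these features into a pattern intensity is omitted. The spatial hash (XOR of coordinates multiplied by per-dimension primes) follows the scheme popularized by Instant-NGP, and the text does not say which variant the authors use.

```python
import math
import random

PRIMES = (1, 2654435761)  # per-dimension primes of the Instant-NGP spatial hash
NUM_LEVELS, BASE_RES, GROWTH, TABLE_SIZE = 4, 16, 2.0, 2 ** 10  # hypothetical

rng = random.Random(0)
# One table of scalar features per level; these would be trainable parameters.
tables = [[rng.uniform(-1.0, 1.0) for _ in range(TABLE_SIZE)]
          for _ in range(NUM_LEVELS)]

def hash_index(ix, iy):
    """Map an integer grid corner to a slot in the feature table."""
    return ((ix * PRIMES[0]) ^ (iy * PRIMES[1])) % TABLE_SIZE

def encode(u, v):
    """Encode a pattern coordinate (u, v) in [0, 1]^2 as one feature per level."""
    features = []
    for level, table in enumerate(tables):
        res = int(BASE_RES * GROWTH ** level)
        x, y = u * res, v * res
        x0, y0 = math.floor(x), math.floor(y)
        fx, fy = x - x0, y - y0
        value = 0.0
        # Bilinear interpolation over the four corners of the enclosing cell.
        for ix, wx in ((x0, 1.0 - fx), (x0 + 1, fx)):
            for iy, wy in ((y0, 1.0 - fy), (y0 + 1, fy)):
                value += wx * wy * table[hash_index(ix, iy)]
        features.append(value)
    return features

features = encode(0.3, 0.7)
```

Coarse levels capture the overall layout of the pattern and fine levels capture sharp edges. Since the tables are learned from observations, no prior description of the pattern is needed.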

Pipeline of Unsupervised SL

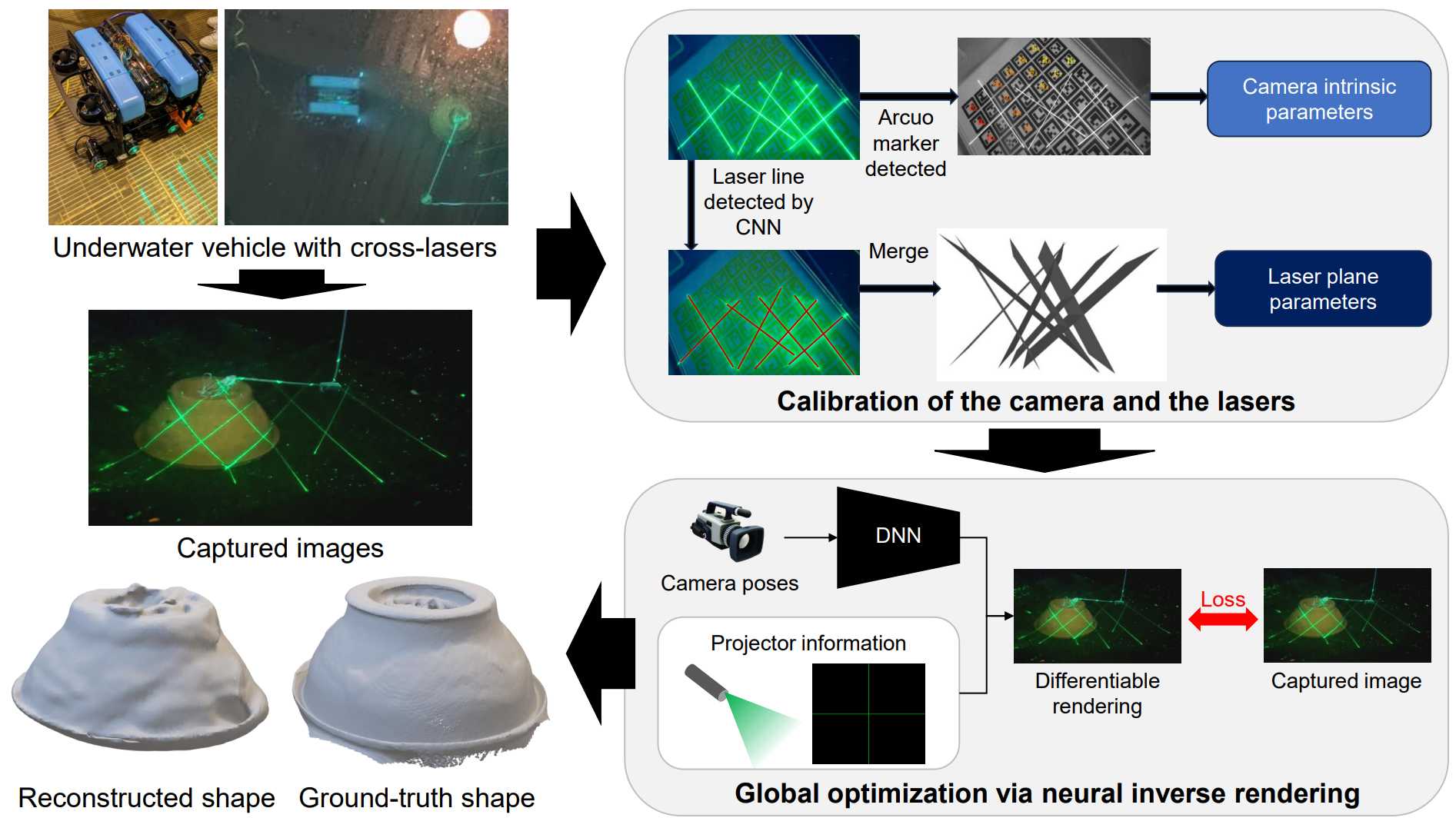

Example of reconstruction using Unsupervised SL

[Evaluation and Outlook in Real Underwater Environments]

We implemented the framework on an actual Remotely Operated Vehicle (ROV) and validated it both in water tanks and at sea. In addition to the three methods described above, we improved robustness further with two components: "refraction-aware ray sampling," which physically corrects for the refraction of light in water, and a module that estimates the initial ROV pose from semantic information extracted with the Segment Anything Model (SAM). As a result, we demonstrated accuracy that surpasses conventional structured light methods even in underwater conditions with turbidity and complex reflections. Because neural representations do not rely on pixel-wise geometry, this work provides a foundational technology for the autonomous operation of robots in extreme environments, such as automated inspection of underwater facilities and resource exploration.
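Refraction-aware ray sampling can be sketched with the vector form of Snell's law. The example below is an assumption-laden simplification and not the authors' model. It treats the camera housing as a flat port at a hypothetical distance `port_z`, ignores the thickness of the glass, and uses nominal refractive indices of 1.0 for air and 1.333 for water.

```python
import math

def refract(direction, normal, n_in=1.0, n_out=1.333):
    """Refract a unit direction at an interface using Snell's law.

    `normal` is a unit vector pointing against the incoming ray.
    Returns None on total internal reflection.
    """
    eta = n_in / n_out
    cos_i = -sum(d * n for d, n in zip(direction, normal))
    sin2_t = eta * eta * (1.0 - cos_i * cos_i)
    if sin2_t > 1.0:
        return None
    cos_t = math.sqrt(1.0 - sin2_t)
    return tuple(eta * d + (eta * cos_i - cos_t) * n
                 for d, n in zip(direction, normal))

def sample_refracted_ray(origin, direction, port_z, distances):
    """Sample points in the water along a camera ray bent at a flat port.

    The port is the plane z = port_z (hypothetical), with the camera in air
    on the side of smaller z.
    """
    t_hit = (port_z - origin[2]) / direction[2]
    hit = tuple(o + t_hit * d for o, d in zip(origin, direction))
    bent = refract(direction, (0.0, 0.0, -1.0))
    return [tuple(h + s * b for h, b in zip(hit, bent)) for s in distances]

angle = math.radians(30.0)
direction = (math.sin(angle), 0.0, math.cos(angle))
bent = refract(direction, (0.0, 0.0, -1.0))
samples = sample_refracted_ray((0.0, 0.0, 0.0), direction, 0.05, [0.5, 1.0, 1.5])
```

Sampling along the bent ray instead of the straight one keeps the 3D points consistent with where the light actually travels. Without this correction the reconstructed geometry would be systematically distorted.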

Evaluation in actual underwater environments using an ROV

Publications

Code

Computer Vision and Graphics Laboratory