Development of 3D Endoscope System Based on Active Stereo

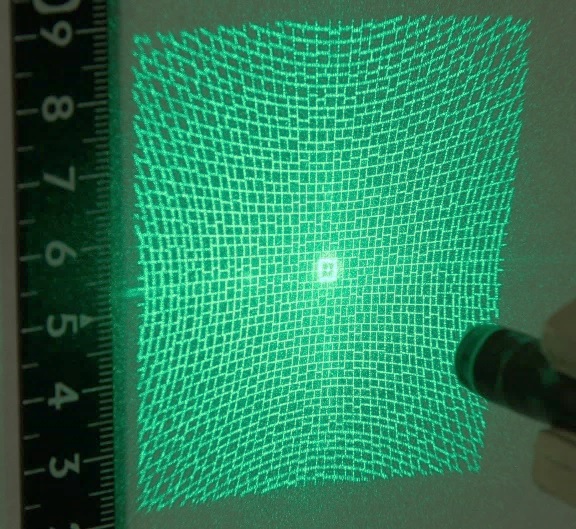

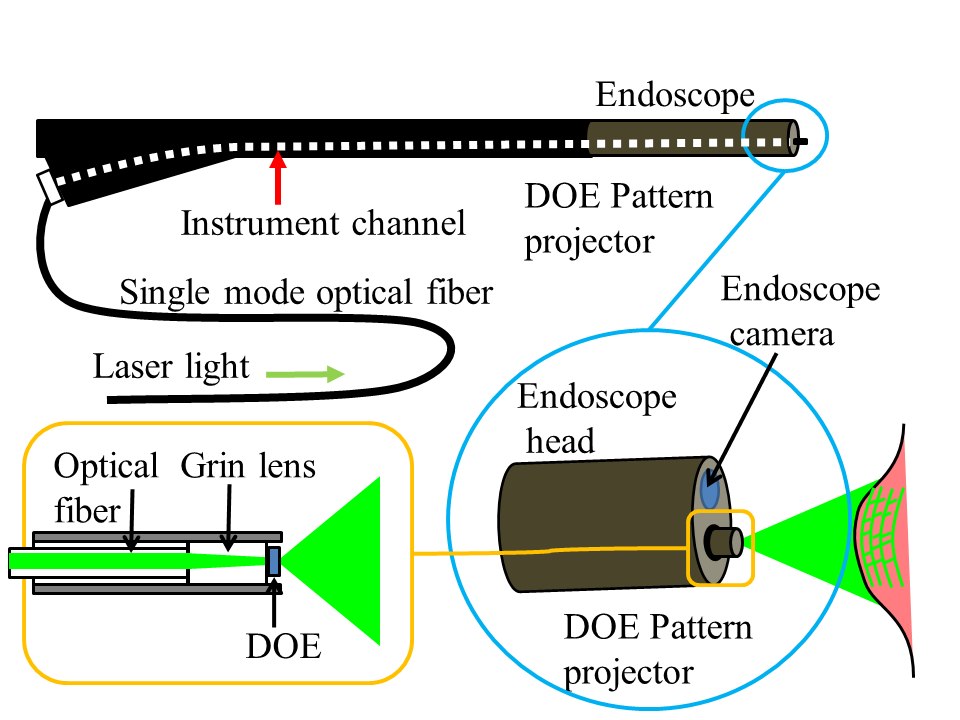

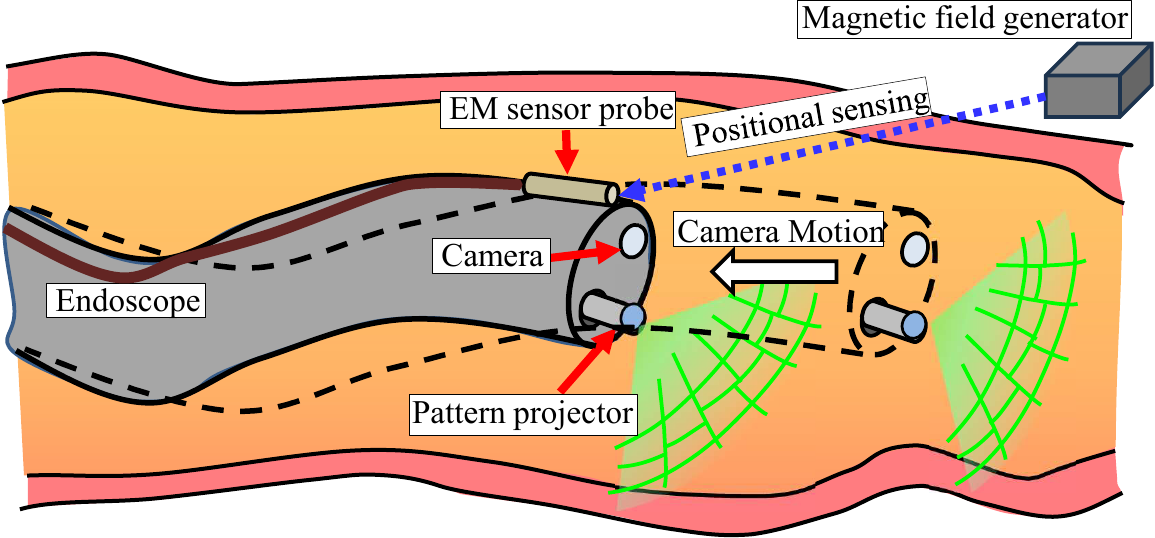

Overview

Measurement of tumor sizes in medicine is important for determining treatment policies. However, in many cases, such measurements rely on visual estimation by physicians, which tends to introduce errors due to individual differences. Objective measurement of tumor shapes using endoscopic devices would represent a significant step toward solving this problem. Furthermore, 3D measurement with endoscopes is becoming increasingly important for surgical robots and for training data in medical AI applications.

We are developing a 3D endoscope system based on active stereo. A compact pattern projector is inserted into the instrument channel of the endoscope, and images are captured by the endoscopic camera while a structured-light pattern is projected onto the target surface. Correspondences between the captured image and the projected pattern are estimated through image processing, and 3D measurement is performed by triangulation.

Pattern Projector: Wide-Angle Micro Projector Using DOE

Since the measurement targets are biological tissues, projected patterns can become blurred due to subsurface scattering within tissues. In addition, noise and disturbances are more significant in the endoscopic environment than in ordinary camera environments. To address these issues, we developed a gap-coded grid pattern that modulates grid points by the gaps between grid lines, and a compact pattern projector that can sharply project patterns using a Diffractive Optical Element (DOE). The projector achieves a wide angle of view of approximately 90 degrees, enabling wide-area coverage. By inserting the projector into the instrument channel, endoscopic imaging can be performed while projecting the pattern.

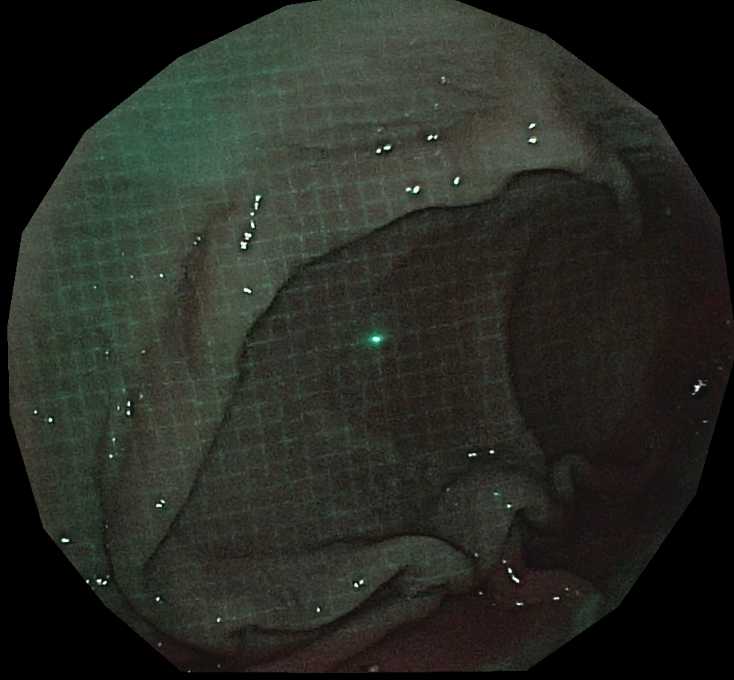

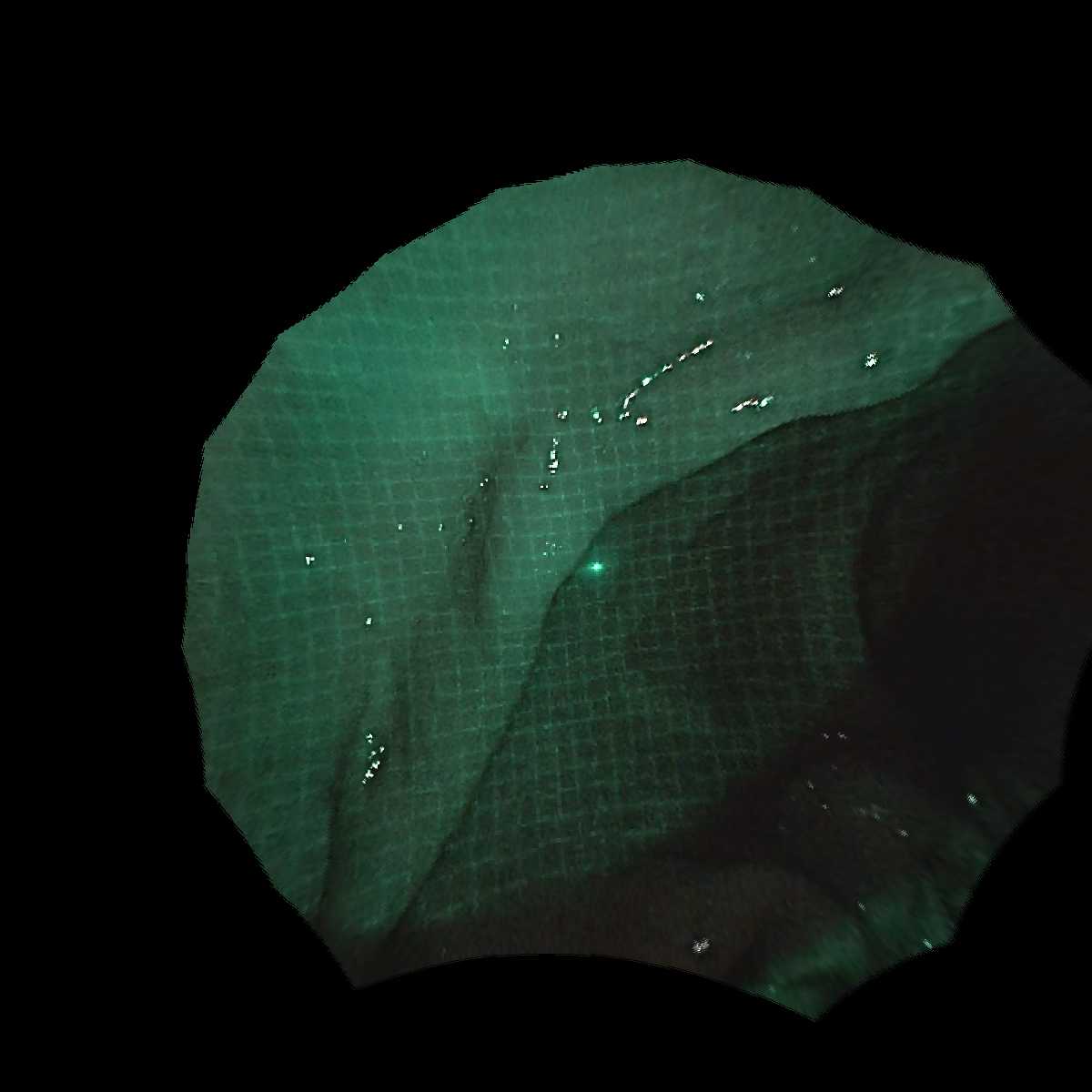

(Left) Projection pattern by the wide-angle micro projector (approx. 90-degree angle of view) [From Furukawa et al., Healthcare Technology Letters, 2025]. (Right) Endoscopic image with pattern projected (inner wall of pig stomach).

Decoding of Projected Patterns by Deep Learning

System configuration of the 3D endoscope

The developed pattern is "decoded" by a deep learning model. This enables pixel-wise estimation of correspondences from the camera to the projector.
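Once a camera pixel has been matched to a projector coordinate, depth follows from triangulation. The following is a minimal sketch of that step, not the system's actual implementation: it assumes a pinhole model for both camera and projector, uses the midpoint of the closest approach between the two rays, and treats the intrinsics, the 5 mm baseline, and the shared camera/projector orientation as hypothetical values chosen only for illustration. All function names are made up for this example.

```python
import math

def dot(a, b):
    """Dot product of two 3-vectors."""
    return sum(x * y for x, y in zip(a, b))

def normalize(v):
    """Scale a 3-vector to unit length."""
    n = math.sqrt(dot(v, v))
    return tuple(c / n for c in v)

def pixel_to_ray(u, v, fx, fy, cx, cy):
    """Back-project a pixel to a unit ray direction (pinhole model, assumed)."""
    return normalize(((u - cx) / fx, (v - cy) / fy, 1.0))

def triangulate_midpoint(cam_origin, cam_dir, proj_origin, proj_dir):
    """Return the midpoint of the shortest segment joining the two rays."""
    w = tuple(c - p for c, p in zip(cam_origin, proj_origin))
    a = dot(cam_dir, cam_dir)
    b = dot(cam_dir, proj_dir)
    c = dot(proj_dir, proj_dir)
    d = dot(cam_dir, w)
    e = dot(proj_dir, w)
    denom = a * c - b * b
    if abs(denom) < 1e-12:
        raise ValueError("rays are (nearly) parallel; depth is undefined")
    s = (b * e - c * d) / denom  # distance parameter along the camera ray
    t = (a * e - b * d) / denom  # distance parameter along the projector ray
    p_cam = tuple(o + s * k for o, k in zip(cam_origin, cam_dir))
    p_proj = tuple(o + t * k for o, k in zip(proj_origin, proj_dir))
    return tuple((p + q) / 2.0 for p, q in zip(p_cam, p_proj))

# Hypothetical setup: identical intrinsics, projector offset 5 mm along x,
# both devices looking along +z (units: metres).
FX = FY = 500.0
CX, CY = 320.0, 240.0
cam_origin = (0.0, 0.0, 0.0)
proj_origin = (0.005, 0.0, 0.0)

# One decoded correspondence: camera pixel (420, 240) <-> projector (370, 240).
cam_dir = pixel_to_ray(420.0, 240.0, FX, FY, CX, CY)
proj_dir = pixel_to_ray(370.0, 240.0, FX, FY, CX, CY)
point = triangulate_midpoint(cam_origin, cam_dir, proj_origin, proj_dir)
print(point)
```

The midpoint form is used here because decoded correspondences are noisy and the two rays rarely intersect exactly; applying the same function to every pixel of a correspondence map would yield a single-frame depth estimate.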

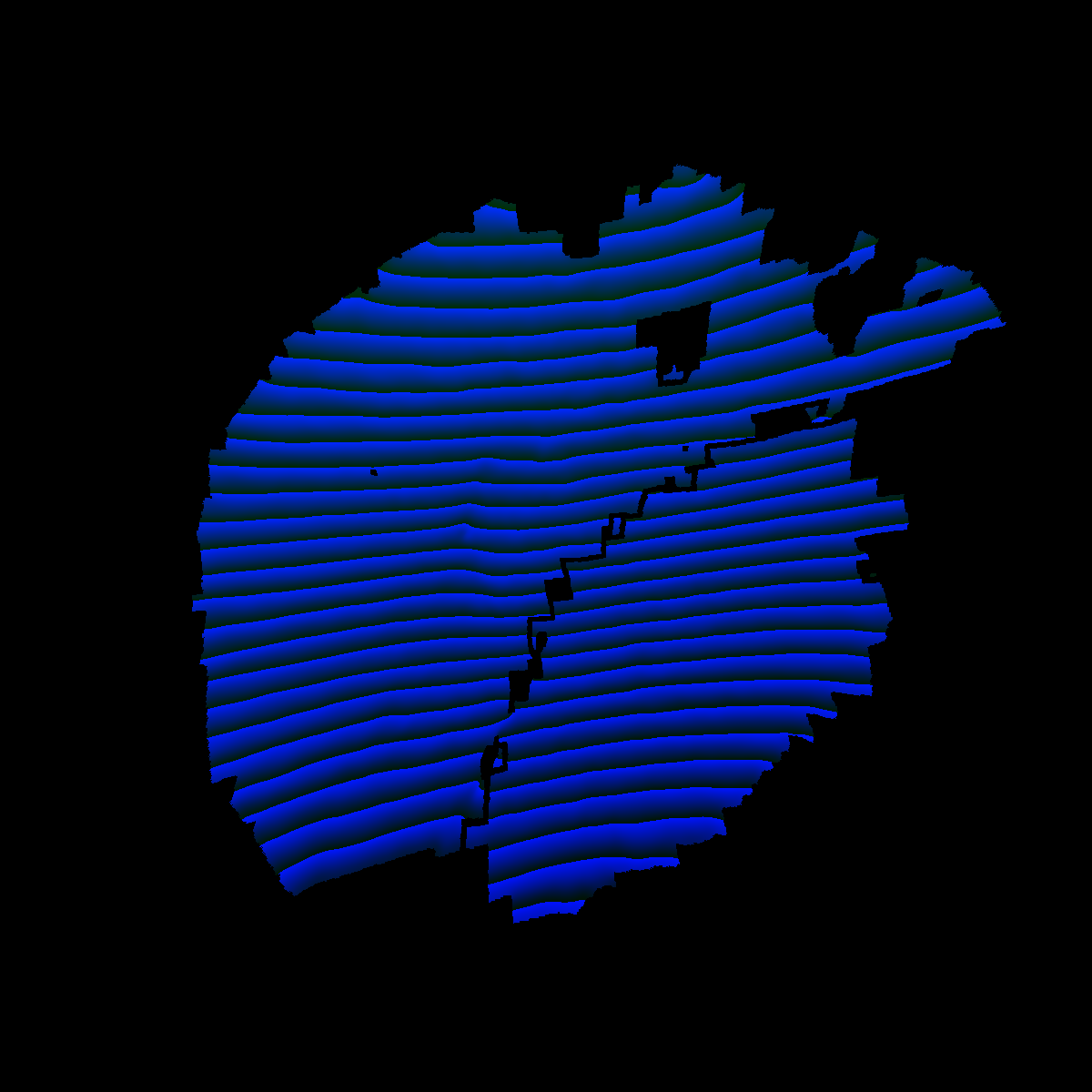

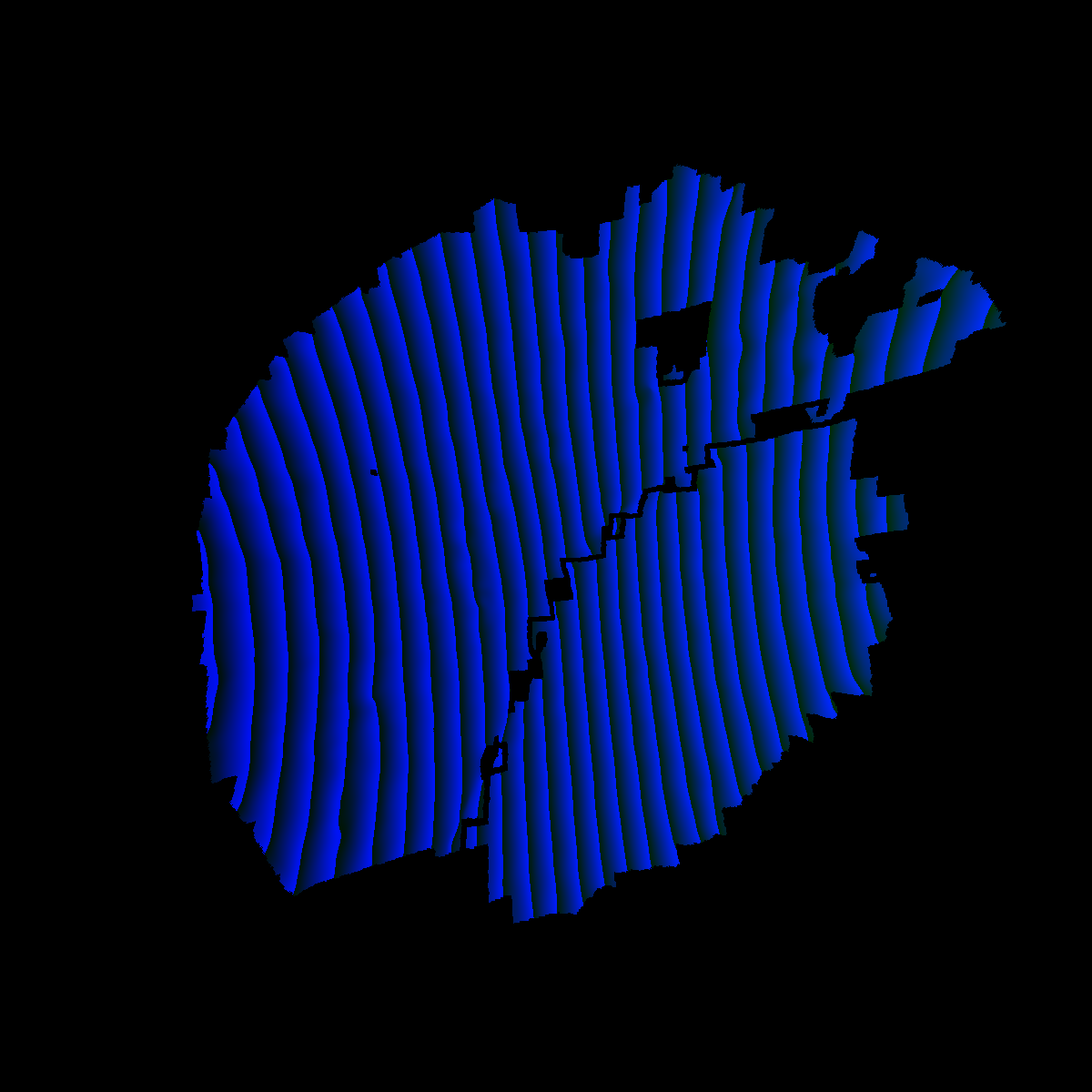

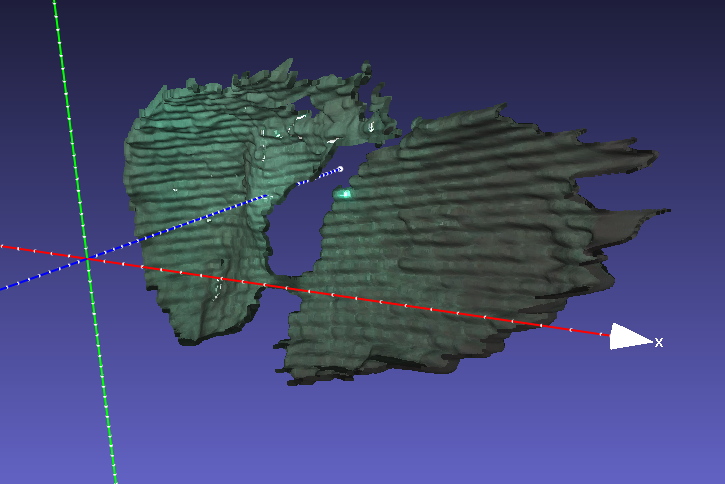

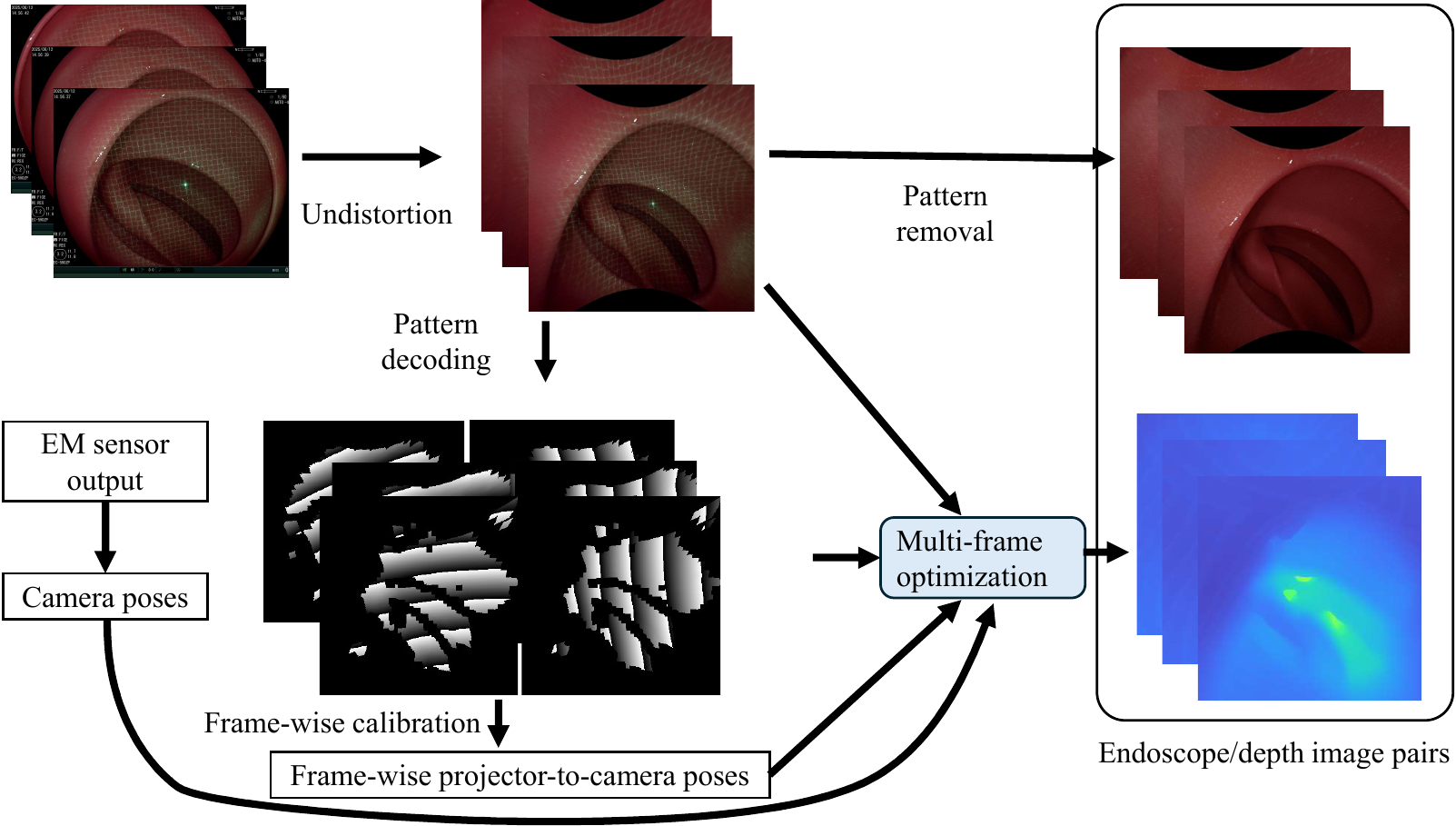

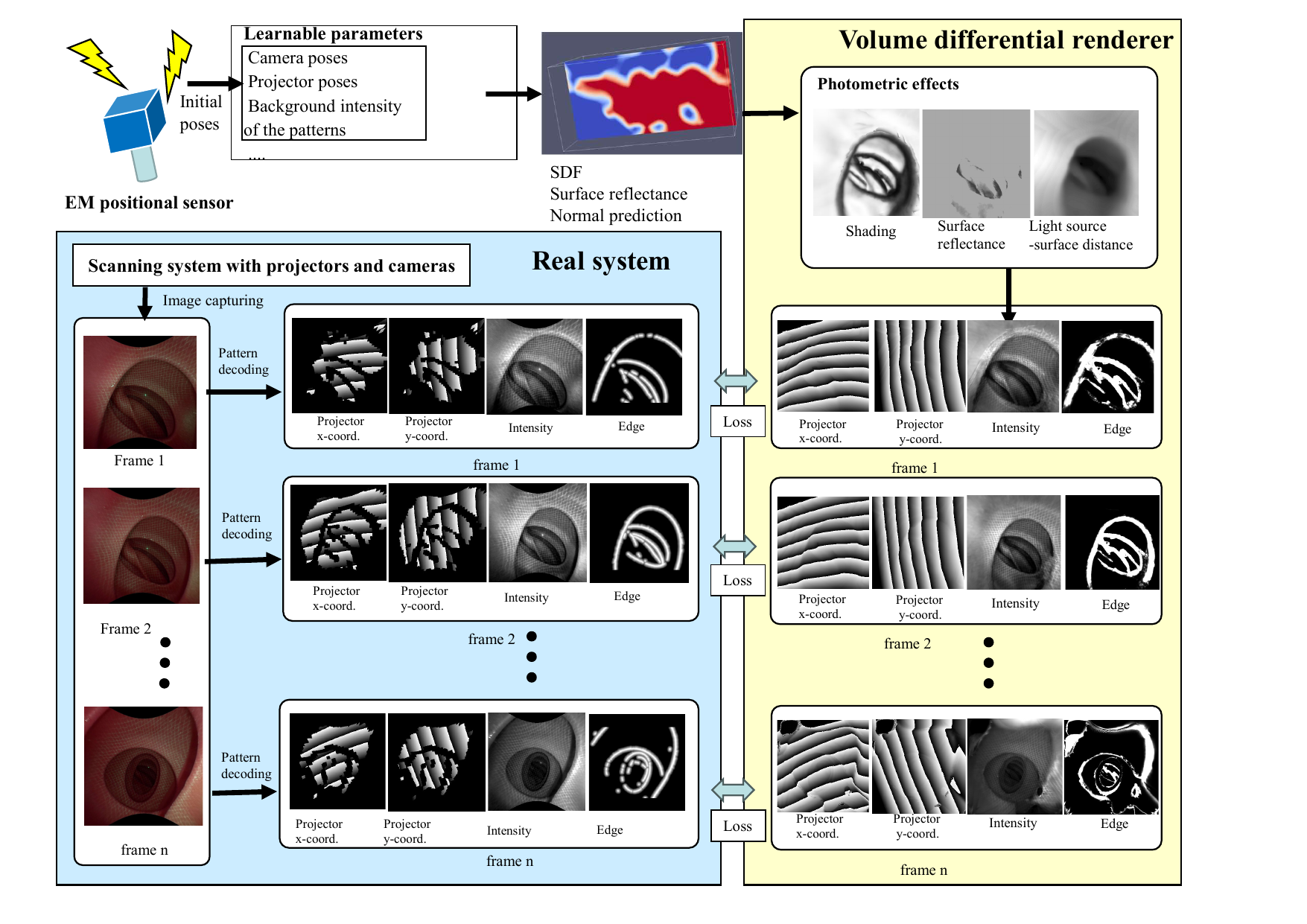

Measurement example of pig stomach: (from left) original image, decoded result [x coordinate], decoded result [y coordinate], single-frame shape.

Multi-Frame Optimization

For 3D reconstruction from sequential images, we propose a method that integrates Neural Signed Distance Field (Neural-SDF) representation with structured-light (SL) projection to improve geometric consistency and accuracy across frames.

System combining an endoscope, a wide-angle micro pattern projector (inserted via instrument channel), and an EM sensor probe. The EM sensor outputs poses with respect to a magnetic field generator. [From Furukawa et al., Healthcare Technology Letters, 2025]

The processing flow is shown below. For each frame, the gap-coded pattern is decoded to obtain a pixel-wise correspondence map, and per-frame calibration (estimation of camera and projector poses) is performed. Then, a joint optimization over all frames is carried out to simultaneously refine camera poses, projector poses, and surface geometry.

Processing flow: gap-coded pattern decoding to obtain correspondence maps (visualized modulo 256), per-frame calibration, and all-frame joint shape optimization. [From Furukawa et al., Healthcare Technology Letters, 2025]

Per-frame shape reconstruction alone suffers from shape gaps caused by decoding errors, and scale or geometric inconsistencies across frames. In the all-frame joint optimization, a pattern projection image and a camera-projector correspondence map are rendered on the neural surface and compared with the captured image and decoded result. The overall shape, camera positions, and projector positions are optimized so that these rendered outputs match the observations, yielding geometrically consistent depth images and 3D shapes across all frames.

All-frame joint optimization: the pattern projection image and camera-projector correspondence map are rendered on the neural surface and compared with captured images and decoded results. Overall shape, camera positions, and projector positions are optimized to minimize the discrepancy. [From Furukawa et al., Healthcare Technology Letters, 2025]

Examples of Multi-Frame Optimization Results

The following videos show geometrically consistent depth images and 3D shape reconstruction results obtained by all-frame joint optimization for multiple in-vivo samples.
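The all-frame joint optimization compares two rendered quantities per frame (the pattern projection image and the correspondence map) against their observed counterparts. The sketch below illustrates one way such a discrepancy could be accumulated over frames. It is an assumption-laden toy, not the published objective: the squared-error form, the validity mask, the weights `w_image` and `w_corr`, and the dictionary keys are all hypothetical, and the neural rendering that would produce the "rendered" arrays is not shown.

```python
def masked_sq_error(rendered, observed, mask):
    """Mean squared difference over pixels whose mask entry is True."""
    total, count = 0.0, 0
    for r_row, o_row, m_row in zip(rendered, observed, mask):
        for r, o, m in zip(r_row, o_row, m_row):
            if m:
                total += (r - o) ** 2
                count += 1
    return total / count if count else 0.0

def joint_discrepancy(frames, w_image=1.0, w_corr=1.0):
    """Sum image and correspondence discrepancies over all frames.

    Each frame is a dict of 2D lists (hypothetical layout):
      rendered_image / captured_image : pattern intensities
      rendered_corr  / decoded_corr   : projector coordinate per camera pixel
      valid                           : True where decoding is trusted
    """
    loss = 0.0
    for f in frames:
        loss += w_image * masked_sq_error(
            f["rendered_image"], f["captured_image"], f["valid"])
        loss += w_corr * masked_sq_error(
            f["rendered_corr"], f["decoded_corr"], f["valid"])
    return loss

# Tiny two-pixel example frame with made-up values.
frame = {
    "rendered_image": [[0.0, 1.0]],
    "captured_image": [[0.0, 0.5]],
    "rendered_corr": [[10.0, 20.0]],
    "decoded_corr": [[10.0, 22.0]],
    "valid": [[True, True]],
}
print(joint_discrepancy([frame]))
```

In an actual optimizer, a scalar of this kind would be minimized with respect to the surface representation and the camera and projector poses; masking out unreliable pixels is one plausible way to keep decoding errors from dominating the result.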

Publications

Computer Vision and Graphics Laboratory